Gavino

Members

Posts: 15

Days Won: 5

Everything posted by Gavino

-

+1 for enabling Nakivo to work with XCP-NG/XOA. I really want to get off the vSphere platform now that Broadcom keep tightening the screws. By the looks of things, there are a lot of people in the same boat (life raft!). This is a huge business opportunity for Nakivo to get the early mover advantage on XCP support, and it would help Nakivo retain existing users who are leaving, or looking to leave, VMware.

-

Standard install of Nakivo 10.5 on Ubuntu 20.04 server here. I had to run the command with the "-q" rather than "q" or else I had the same error as Bedders. Seems to have done the job... I copied the file to "*-original" first and can see that the jar file has now shrunk:

    root@nakivovm:/opt/nakivo/director/libs# lsb_release -a
    No LSB modules are available.
    Distributor ID: Ubuntu
    Description: Ubuntu 20.04.3 LTS
    Release: 20.04
    Codename: focal
    root@nakivovm:/opt/nakivo/director/libs# pwd
    /opt/nakivo/director/libs
    root@nakivovm:/opt/nakivo/director/libs# ls -la | grep log4j-core*.jar
    -rw-r--r-- 1 root root 825339 Dec 16 18:50 log4j-core-2.2.jar <<<< now smaller.
    -rw-r--r-- 1 root root 826732 Dec 16 18:49 log4j-core-2.2.jar-original

Am wondering if I have to do this on my Transporter virtual appliances as well?
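For anyone who wants more than a file-size comparison, here is a minimal sketch of a check. The command itself is not quoted in this post, so this assumes it is the widely published Log4Shell mitigation that deletes `org/apache/logging/log4j/core/lookup/JndiLookup.class` from the log4j-core jar. The helper name `jar_has_jndi_lookup` is made up for illustration and is not part of any Nakivo tooling.

```python
import zipfile

# Assumption: this is the class entry the mitigation command removes.
JNDI_CLASS = "org/apache/logging/log4j/core/lookup/JndiLookup.class"


def jar_has_jndi_lookup(jar_path):
    """Return True if the jar still contains the JndiLookup class entry."""
    # A jar is a zip archive, so the standard zipfile module can list it.
    with zipfile.ZipFile(jar_path) as jar:
        return JNDI_CLASS in jar.namelist()
```

Under that assumption, calling `jar_has_jndi_lookup("/opt/nakivo/director/libs/log4j-core-2.2.jar")` should return `False` for the patched jar and `True` for the `-original` copy.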

-

Hi Nakivo. Can I please have Nakivo developers state whether Nakivo needs patching for this, or is it fine? https://www.cve.org/CVERecord?id=CVE-2021-45046 I have updated the Nakivo Director and all transporters to the latest versions. Thanking you.

-

Thanks for the info. I'll wait for 10.5 before upgrading

-

Hi. When is VMware 7 Update 3 support planned?

-

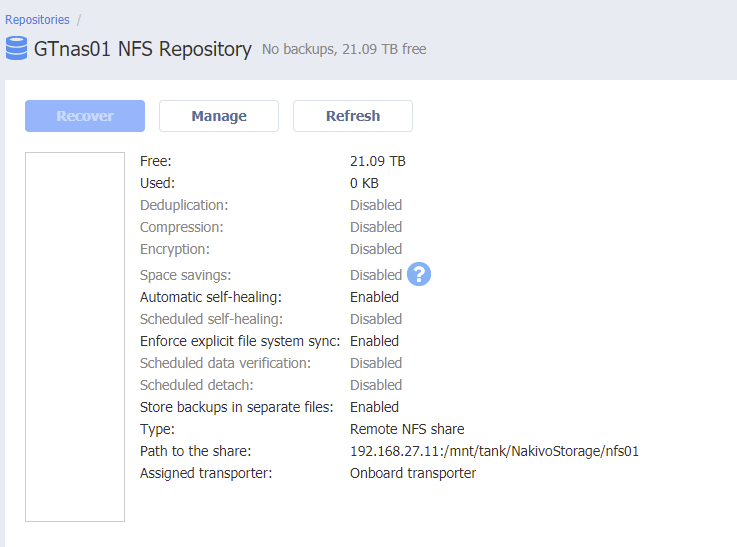

Thanks for the info. I have stuck with not enabling the storing of backups in separate files because I'm a little worried about increased space requirements, and because I rely on NFS, which I don't believe is supported for immutable recovery points. I am using a ZFS back-end for my NFS repository, and have good quality SAS drives, ECC RAM, as well as regular ZFS pool scrubs and S.M.A.R.T. checks (outside of backup times).

A key feature I've enabled is synchronous writes ("enforce explicit filesystem sync") as I've read a lot about how important that is to keep data from corrupting. My recommendation would be for your product to set this on as the default, telling customers to disable it only if they have a battery-backed write cache for their storage, or if they suffer crippling speed issues (I would recommend different NAS hardware if that is the case!). My speeds are still fine with explicit filesystem sync enabled.

I also have the NAS on two high-quality UPS units (one per power feed), which do double power conversion, active PFC, and pure sine wave output. My CPUs also have extremely good thermal paste. All firmware is up to date. I'm pretty sure I've done everything in my power to not get any repository corruption. Will see how it goes after several months of service. If I ever get corruption I'd be looking next towards storing backups in separate files. So far so good though.
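To illustrate what an explicit filesystem sync means at the system-call level, here is a minimal sketch. It is not Nakivo's code and says nothing about how the product implements the setting. The function name `write_durably` is hypothetical.

```python
import os


def write_durably(path, data):
    """Write bytes to path and request a sync to stable storage before returning."""
    with open(path, "wb") as f:
        f.write(data)
        f.flush()             # drain Python's userspace buffer into the OS
        os.fsync(f.fileno())  # ask the OS to commit the file's data to storage
```

Without the `os.fsync` call the data may sit in a cache for a while after the write returns, so a power loss in that window can lose it. That is the trade-off against speed described above.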

-

10.4 on Ubuntu 18.04 introduces serious NFS repository issues

Gavino replied to Gavino's topic in General threads

There were mistakes on my end - I didn't realise I was reading out-of-date documentation, as the documentation only seems to have the version number on the front page, and I arrived at the info via a direct link from a Google search. Even with the most current documentation, I think many long-term users will struggle to understand fully what is going on with these changes. Thanks for following up.

-

10.4 on Ubuntu 18.04 introduces serious NFS repository issues

Gavino replied to Gavino's topic in General threads

Thanks for the link Mario. For my use I still really like global deduplication, for the space/cost savings, and indeed it might be necessary given my storage space requirements.

I've recently upgraded from an older Dell R710 6-disk setup to a newer Dell R730 8-disk setup, and switched from SATA to SAS drives. I definitely recommend SAS over SATA - especially for VM Flash Boot and Screenshot Verification. It's worth the extra $$ IMHO. The firmware quality is usually better too, as is the SMART reporting. Previously with SATA I found it too slow to have "Enforce explicit file system sync" on. The SATA bus is only half-duplex, and only 6Gbps, vs the full-duplex SAS3 12Gbps. Now with my new setup I have explicit filesystem sync turned on and still get about 1.2-1.5Gbps speeds, which I'm happy with. It was terrible on the old system so I had it off there.

With my old setup (without filesystem sync) I did have one corruption on a single VM which has about 2TB of data - a Flash VM Boot would always come up with disk errors and would take forever to try and fix those on boot with checkdisk, which meant Screenshot Verification wouldn't work for that one, but my other 8 VMs were fine.

My main repo is now a high quality TrueNAS Core 12.0-U5 ZFS setup with enterprise SAS drives and ECC buffered RDIMMs, and the ZFS dataset shared via NFS to my Nakivo Director on Ubuntu 18.04 respects the explicit filesystem sync setting set by Nakivo. I have everything on power conditioning UPS, so hoping I don't have any further repo corruptions. I put a 2TB NVMe drive as L2ARC for my ZFS pool, so hoping that gives a nice speed boost to the VM Flash Boot and Screenshot Verification, even after months of backups (and dedup complexity).

Another thing I did was turn off compression for Nakivo, as my NFS datastore uses LZ4 ZFS compression natively, so avoiding double-compression issues. Now that Nakivo only has to worry about dedup, and not dedup + compression, I am hoping that my new setup will be super reliable, even with global dedup on. I really think global dedup is still fine to use from a reliability standpoint, but you just have to manage the setup with more care (quality ECC RAM, quality UPS, high quality (SAS) drives, high quality drive controller, filesystem sync, optimal compression settings, keep the BIOS and controller+drive firmwares up to date etc). Anyway I'll see how I go.

-

Thanks for the info. What's strange is that "Deduplication" and "Store backups in separate files" are both listed as repository parameters at the same time - when really they are the negative of each other aren't they? Like - it's not possible to have both be enabled, or both be disabled, is it? It's like you haven't introduced a new feature, but just added another name for an existing one, greatly confusing people in the process.

I suspect "store backups as separate files" (aka no deduplication) would take up too much space. But I also find that global deduplication is risky, for the reliability reasons you've listed above. What I was hoping you were doing with this new feature, is to have per-VM deduplication, rather than global. That way if there is any corruption, then it would/should only affect a single VM, and I could just start backing up that VM from scratch again, rather than blowing everything away - which takes a long time to do for that initial backup (I can only start that on a Saturday afternoon). To me, that's a great trade-off between global dedup vs no dedup. I was really hoping this new feature would be "store VM backups separately" with the option to still have deduplication on (on a per-VM basis).
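The trade-off between global and per-VM dedup can be sketched with a toy content-addressed index. This is purely illustrative: the function `build_index`, the VM names, and the block contents are all hypothetical, and it is not a description of how Nakivo stores data.

```python
import hashlib


def build_index(backups, per_vm):
    """Return {(scope, block_hash): set of VMs that reference that stored block}."""
    index = {}
    for vm, blocks in backups.items():
        # Global dedup shares one scope; per-VM dedup gives each VM its own.
        scope = vm if per_vm else "global"
        for block in blocks:
            key = (scope, hashlib.sha256(block).hexdigest())
            index.setdefault(key, set()).add(vm)
    return index


# Two hypothetical VMs that share one identical block.
backups = {
    "vm-a": [b"os-image", b"data-a"],
    "vm-b": [b"os-image", b"data-b"],
}
os_hash = hashlib.sha256(b"os-image").hexdigest()

global_index = build_index(backups, per_vm=False)
per_vm_index = build_index(backups, per_vm=True)

# Stored block count, then the VMs hit if the shared block's stored copy is damaged.
print(len(global_index), sorted(global_index[("global", os_hash)]))
print(len(per_vm_index), sorted(per_vm_index[("vm-a", os_hash)]))
```

In this toy case global dedup stores 3 blocks but a damaged shared block hits both VMs, while per-VM dedup stores 4 blocks and the damage stays with one VM.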

-

10.4 on Ubuntu 18.04 introduces serious NFS repository issues

Gavino replied to Gavino's topic in General threads

OK I worked out that "Store backups in separate files" has the exact same meaning as "no deduplication". If I am wrong - then please let me know. Nakivo should have just kept it simple by keeping the "deduplication" wording and not confused people with another option that means the same thing. It's a bit crazy to look at a repository after it's been created, and see "Deduplication" and "Store backups in separate files" as completely separate line items (which are far apart). Both refer to the same thing. I bet there is a product designer pointing his finger at a colleague right now going "see, I told you that customers would not like this!". And they would be right.

It's pretty strange that for this feature, the new docs are now saying "Enabling this option is highly recommended to ensure higher reliability and performance". WHAT?! Dedup was one of the main reasons I bought Nakivo! https://www.nakivo.com/vmware-backup/#benefits - this talks up dedup as a benefit and cost saving. Nowhere in the sales pitch do you say that it's highly recommended not to use it!! It's interesting to note also that deduplication and immutable backup repository are incompatible with each other, as I found out from emailing tech support.

Some feedback on documentation. Nakivo should put 10.3 or 10.4 (or whatever the version is) at the top of each documentation page. That would be very handy. I thought I was reading the latest version when I posted above, as the Release Notes page mentioned 10.4. I was like... ok this looks like I'm in the right place for the latest info as it lists 10.4. https://helpcenter.nakivo.com/display/RN/Release+Notes I didn't realise until after contacting tech support, that it was the older documentation. Also, the images zoom on the 10.3 docs but not 10.4. I prefer zoom, as sometimes the info is unreadable until it's zoomed in. Please fix this in the 10.4 docs. Thanks.

An issue I found here: https://helpcenter.nakivo.com/User-Guide/Content/Deployment/System-Requirements/Feature-Requirements.htm#Ransomware

------ quote
For Local Folder type of Backup Repository, the following conditions must be met: The Backup Repository data storage type must be set to Incremental with full backups.
----- end quote

Whoever wrote that maybe doesn't realise that "incremental with full backups" doesn't exist as an option any more in 10.4, like it did in 10.3. I have been told in an email that "...there is the option "store backups in separate files". When this option is selected, the repository is "Incremental with Full Backup" and Immutable is supported." It would be handy if you explained that in your documentation. The line above should probably say: The Backup Repository data storage type must be set to "store backups in separate files" (i.e. deduplication switched off). This implicitly sets the data storage policy to Incremental with full backups.

-

Are you using any Nakivo-specific plugins for Check_MK, or just enabling SNMP on the node hosting Nakivo, and polling that? I use Check_MK as well, but don't have anything specific for Nakivo. I'm just checking connectivity to the host it's on, and disk space etc. Thanks.

-

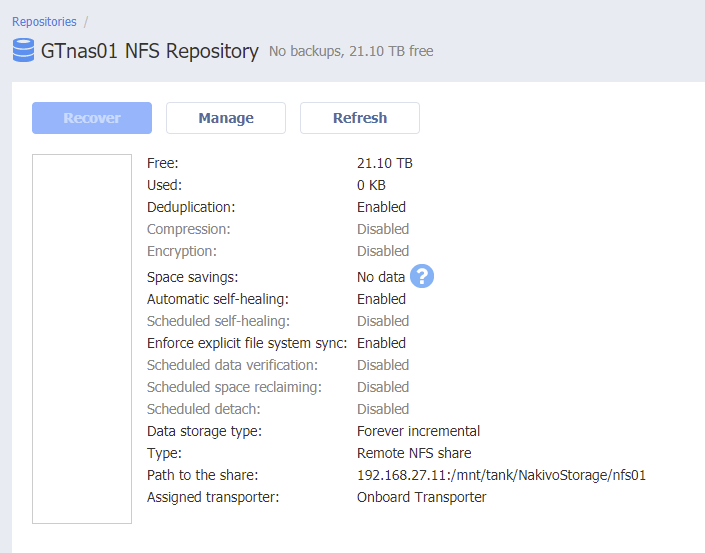

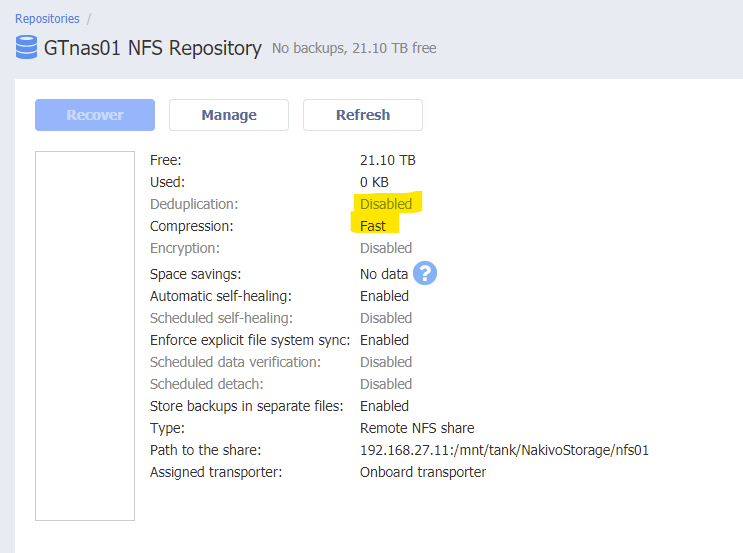

I have a fresh install of Ubuntu Server 18.04 with nothing but the following done to it before installing Nakivo ("NAKIVO_Backup_Replication_v10.4.0.56979_Installer-TRIAL.sh"):

    sudo apt update
    sudo apt install nfs-common
    sudo apt install cifs-utils
    sudo apt install open-iscsi
    sudo apt install ntfs-3g

I installed Nakivo with flags "--eula-accept -f -C" since I am using NFS instead of an onboard repository.

You can see in 10.4 that multiple fields are missing. Where is the data storage policy ("Forever incremental" etc)? Where is the deduplication option? I don't want compression in Nakivo as my TrueNAS storage does LZ4 compression already. Even though Data Size Reduction is set to Disabled, it's still telling me that fast compression is set (even though I toggled that to Disabled, before setting Data Size Reduction to Disabled). If I experiment and turn "Data Size Reduction" to Enabled, I am then able to change compression to Disabled and have that stick, but still - deduplication remains off, and that matters greatly to me. Still no idea what my data storage policy is.

I uninstalled 10.4 and installed 10.3 on the same box, and that is behaving correctly. Compare the screenshots. 10.4 is a hot mess for NFS right now and I simply cannot use it. You broke login wallpapers in 10.3, which was annoying, but this is simply horrible. Where are your testing procedures? Support bundle from 10.4 has been sent. I am running 10.3 now so you'll have to replicate in your lab, which should be easy to do.

-

The support bundle link gives "Page Not Found". The URL has an extra ")" at the end.